Deep Video Portraits

Hyeongwoo Kim1 Pablo Garrido2 Ayush Tewari1 Weipeng Xu1 Justus Thies3

Matthias Nießner3 Patrick Pérez2 Christian Richardt4 Michael Zollhöfer5 Christian Theobalt1

1 MPI Informatik 2 Technicolor 3 TU Munich 4 University of Bath 5 Stanford University

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2018)

Abstract

We present a novel approach that enables photo-realistic re-animation of portrait videos using only an input video. In contrast to existing approaches that are restricted to manipulations of facial expressions only, we are the first to transfer the full 3D head position, head rotation, face expression, eye gaze, and eye blinking from a source actor to a portrait video of a target actor. The core of our approach is a generative neural network with a novel space-time architecture. The network takes as input synthetic renderings of a parametric face model, based on which it predicts photo-realistic video frames for a given target actor. The realism in this rendering-to-video transfer is achieved by careful adversarial training, and as a result, we can create modified target videos that mimic the behavior of the synthetically-created input. In order to enable source-to-target video re-animation, we render a synthetic target video with the reconstructed head animation parameters from a source video, and feed it into the trained network – thus taking full control of the target. With the ability to freely recombine source and target parameters, we are able to demonstrate a large variety of video rewrite applications without explicitly modeling hair, body or background. For instance, we can reenact the full head using interactive user-controlled editing, and realize high-fidelity visual dubbing. To demonstrate the high quality of our output, we conduct an extensive series of experiments and evaluations, where for instance a user study shows that our video edits are hard to detect.

Downloads

- Paper preprint (PDF, 5 MB)

- Press release (University of Bath, 17 August 2018)

- Supplemental video (MP4, 228 MB)

- Presentation slides (PowerPoint, 382 MB)

- This paper on arXiv (arXiv:1805.11714)

- GVV project website (additional information)

Copyright

© Copyrights by the Authors, 2018. This is the authors’ version of the work. It is posted here for your personal use. Not for redistribution. The definitive version will be published in ACM Transactions on Graphics.

Please note — Important information about our work

Goal:

In this project, our aim is to demonstrate the capabilities of modern computer vision and computer graphics technology, which rest on advanced techniques for scene reconstruction and image synthesis, and convey them in an approachable and fun way. We also discuss how they serve as a building block for creative applications, for instance in content creation for VFX, video production and postprocessing, or telepresence and virtual and augmented reality.

Context:

We want to emphasize that computer-generated videos have been an integral part of feature-film movies for over 30 years. Virtually every high-end movie production contains a significant percentage of computer-generated imagery, or CGI, from Lord of the Rings to Benjamin Button. These results are hard to distinguish from reality and it often goes unnoticed that this content is not real. Thus, the synthetic modification of video clips was already possible for a long time, but the process was time-consuming and required domain experts. The production of even a short synthetic video clip costs millions in budget and multiple months of work, even for professionally trained artists, since they have to manually create and animate vast amounts of 3D content.

Progress:

Over the last few years, approaches have been developed which enable the creation of realistic synthetic content based on much less input, for example, based on a single video of a person or a collection of photos. With these approaches, much less work is required to synthetically create or modify a video clip. This makes these approaches accessible to a broader non-expert audience.

Applications:

These new and more efficient ways of editing video open up many interesting applications in computer graphics or even virtual and augmented reality. There are many possible and creative use cases for our technology, which is the motivation for us to develop it.

One important use case is in post-production systems in the film industry, where it is very common that scenes have to be edited after they were shot. With an approach like the one we present, even the positioning of an actor’s head and their facial expression can be edited to better match the intended framing of a scene. Much of this is already done today, but with much greater, often manual, necessary effort. It is a very difficult task to edit the pose of a dynamic object in a scene such that it is plausible, the modification is space-time coherent over all video frames, and the occluding / disoccluding scene content is correctly filled in or removed.

Video production is very different and also much more complex than photography in several ways. For instance, it requires proper planning of timing, actor position, or head orientation in a scene in order to create proper framings and camera angles of scenes, such as conversation scenes, and in order to tell a story most convincingly. An algorithm like ours would enable correction of scene composition errors in already filmed video footage, and therefore make post-processing easier.

Another use case is dubbing. Dubbing is a post-production step used in filmmaking to replace the voice of an original actor by the voice of a dubbing actor that speaks in another language. Production-level dubbing requires well-trained dubbers and extensive manual interaction. A good temporal synchronization of audio and video is mandatory, since viewers are very sensitive to discrepancies between the auditory and visual channel. Unfortunately, despite the best possible synchronization of dubbed voice to video, there will always be a disturbing mismatch between the actor and the new voice. With existing dubbing techniques, this fundamental discrepancy cannot be solved. Approaches such as ours enable to directly adapt the visual channel and the actor’s facial expressions to the new audio track, which can help to reduce these discrepancies. We believe that our technique might also pave the way to live translation and dubbing in a teleconferencing scenario.

These are just a few examples of highly creative use cases for our technology. Other possible applications are in video conferencing, where the technique can correct the eye gaze and head pose, such that the person actually looks into the eyes of the person at the other end, while looking at their own screen (remember that cameras and screens are usually in different locations, which causes this problem). Our approach could further be used in the context of a virtual mirror (virtual make-up/hairstyle). Furthermore, it could be used in the context of algorithmic head-mounted display (HMD) removal for VR teleconferencing, where the HMD could be edited out to enable natural communication without the display device in the way. Many of these creative uses cases were already shown in some of our previous research that we conducted with our international research partners, for instance, see the project pages of HeadOn, FaceVR and VDub.

Misuses:

Unfortunately, besides the many positive and creative use cases, such technology could also be misused. For example, videos could be modified with malicious intent, for instance in a way which is disrespectful to the person in a video. Currently, the modified videos still exhibit many artifacts, which makes most forgeries easy to spot. It is hard to predict at what point in time such modified videos will be indistinguishable from real content to our human eyes. However, as we discuss below, even then modifications can still be detected by algorithms.

Implications:

As researchers, it is our duty to show and discuss both the great application potential, but also the potential misuse of a new technology. We believe that all aspects of the capabilities of modern video modification approaches have to be openly discussed. We hope that the numerous demonstrations of our approach will also inspire people to think more critically about the video content they consume every day, especially if there is no proof of origin. We believe that the field of digital forensics should and will receive a lot more attention in the future to develop approaches that can automatically prove the authenticity of a video clip. This will lead to ever better approaches that can spot such modifications even if we humans might not be able to spot them with our own eyes (see comments below).

Detection:

The recently presented systems demonstrate the need for ever-improving fraud detection and watermarking algorithms. We believe that the field of digital forensics will receive a lot of attention in the future. Consequently, it is important to note that the detailed research and understanding of the algorithms and principles behind state-of-the-art video editing tools, as we conduct it, is also the key to develop technologies which enable the detection of their use. This question is also of great interest to us. The methods to detect video manipulations and the methods to perform video editing rest on very similar principles. In fact, in some sense, the algorithm to detect the Deep Video Portraits modification is developed as part of the Deep Video Portraits algorithm. Our approach is based on a conditional generative adversarial network (cGAN) that consists of two subnetworks: a generator and a discriminator. These two networks are jointly trained based on opposing objectives. The goal of the generator is to produce videos that are indistinguishable from real images. On the other hand, the goal of the discriminator is to spot the synthetically generated video. During training, the aim is to maintain an equilibrium between both networks, i.e., the discriminator should only be able to win in half of the cases. Based on the natural competition between the two networks and their tight interplay, both networks become more sophisticated at their task.

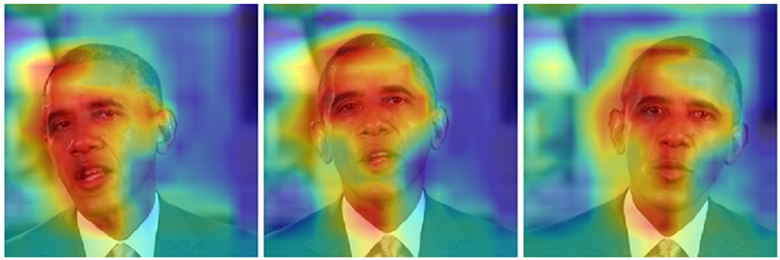

Note that detection (binary classification) is, in general, an easier problem than image generation, which means that it will always be possible to train a highly accurate detector given any specific image forgery approach. We conducted several experiments along those lines that show – despite the fact that the video modifications become increasingly imperceptible to the human eye – we can always train very effective discriminators to detect such modifications. The following is an example of such a network that is able to clearly detect the Deep Video Portraits modifications on this video sequence. The network can even inform the user where it “looks” to make its decision (known as an ‘attention map’), for example for the following three modified frames:

Our first results are also supported by a much larger recent study on forgery detection.

Many techniques that are needed to ensure video authenticity – and to clearly mark videos with modifications – already exist; others should be continuously refined alongside new video editing tools. We believe that in the future, research about the many creative applications of video editing can and has to be flanked with continuously improved methods for forgery detection – ideally in tandem. Research like ours builds the methodical backbone for both. Software companies intending to provide advanced video editing capabilities commercially could clearly watermark each video that was edited and even denote clearly – as part of that watermark – what part and element of the scene was modified. No creative user of such techniques would object to such watermarking.

Through our basic research, we will in the future further contribute to both the creative applications and the prevention of malicious use of such technology. In another strand of our research, we also investigate questions of personal privacy in community image collections and show that image editing tools can be used to increase the personal privacy of people on community photo platforms.

Summary:

To summarize, new video editing techniques will – in the future – enable great new creative and interactive applications that everyday users can enjoy. At the same time, the general public has to be aware of the capabilities of modern technology for video generation and editing. This will enable them to think more critically about the video content they consume every day, especially if there is no proof of origin. As we have shown, the technology to provide this proof of origin in the case of doubt can be developed and will be further improved in the future. Further basic research on the many applications and on technology to prove authenticity will thus be important in the future.

Bibtex

@article{DeepVideoPortraits,

author = {Hyeongwoo Kim and Pablo Garrido and Ayush Tewari and Weipeng Xu and Justus Thies and Matthias Nie{\ss}ner and Patrick P{\'e}rez and Christian Richardt and Michael Zollh{\"o}fer and Christian Theobalt},

title = {Deep Video Portraits},

journal = {ACM Transactions on Graphics},

year = {2018},

volume = {37},

number = {4},

pages = {163:1--14},

month = aug,

issn = {0730-0301},

doi = {10.1145/3197517.3201283},

url = {http://richardt.name/publications/deep-video-portraits/},

}