Dr. Christian Richardt

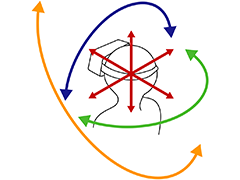

Christian Richardt is a Research Scientist at Meta Reality Labs in Zurich, Switzerland, having previously worked at the Codec Avatars Lab in Pittsburgh, USA. Before that, he was a Reader (=Associate Professor) and EPSRC-UKRI Innovation Fellow in the Visual Computing Group and the CAMERA Centre at the University of Bath. His research interests cover the fields of image processing, computer graphics and computer vision. His research combines insights from vision, graphics and perception to reconstruct visual information from images and videos and to create high-quality visual experiences, with a focus on novel-view synthesis.

Christian was previously a postdoctoral researcher working on user-centric video processing and motion capture with Christian Theobalt at the Intel Visual Computing Institute at Saarland University and also in the Graphics, Vision and Video group at Max-Planck-Institut für Informatik in Saarbrücken, Germany. Before that, he was a postdoc in the REVES team at Inria Sophia Antipolis, France, working with George Drettakis and Adrien Bousseau, and he interned with Alexander Sorkine-Hornung at Disney Research Zurich, where he worked on Megastereo panoramas.

Christian graduated with a PhD and BA from the University of Cambridge in 2012 and 2007, respectively. His PhD in the Computer Laboratory’s Rainbow Group was supervised by Neil Dodgson. His doctoral research investigated the full life cycle of videos with depth (RGBZ videos): from their acquisition, via filtering and processing, to the evaluation of stereoscopic display.

News

- June 2026

- My intern Ruofan Liang and I will be presenting our LuxRemix paper at CVPR 2026 in Denver, Colorado, USA.

- May 2026

- My PhD student Jundan Luo presented her final PhD paper ProjectiveShading at Eurographics 2026 in Aachen, Germany.

- March 2026

- We presented two papers at 3DV 2026 in Vancouver, Canada: MapAnything and Fillerbuster.

- December 2025

- I was delighted to attend CVMP 2025 in London to give a keynote on Unleashing Immersive Spaces by Capturing, Reconstructing, and Rendering Reality.

- Our intern Tzofi Klinghoffer presented his work Shoot-Bounce-3D at SIGGRAPH Asia 2025 in Hong Kong.

- October 2025

- We organised a workshop on Generative Scene Completion at ICCV 2025 in Honolulu, USA.

- June 2025

- We presented five papers by our outstanding interns and collaborators at CVPR 2025 in Nashville: Time of the Flight of the Gaussians (oral), SoundsVista (highlight), IRIS, Geometry-guided Online 3D Video, and Volumetric Surfaces.

- I also presented at the CVPR Tutorial on Volumetric Video in the Real World and the 2nd CVPR Workshop on Neural Fields Beyond Conventional Cameras.

- March 2025

- My PhD student Manuel Rey-Area presented his final PhD paper 360° 3D Photos from a Single 360° Input Image at IEEE VR 2025 in Saint-Malo, France.

- December 2024

- We presented URAvatar at SIGGRAPH Asia 2024 in Tokyo.

- October 2024

- We presented Flowed Time of Flight Radiance Fields (F-TöRF) at ECCV 2024 in Milan.

- June 2024

- We presented five papers by our outstanding interns at CVPR 2024 in Seattle: PlatoNeRF, HybridNeRF, SpecNeRF, Real Acoustic Fields, and ViewDiff.

- My PhD student Jundan Luo has two papers accepted: IntrinsicDiffusion (SIGGRAPH 2024) and CRefNet (TVCG 2024).

- November 2023

- Three papers accepted:

    1. Neural Feature Filtering for Faster Structure-from-Motion Localisation (BMVC 2023 in Aberdeen),

    2. PyNeRF: Pyramidal Neural Radiance Fields (NeurIPS 2023 in New Orleans), and

    3. VR-NeRF: High-Fidelity Virtualized Walkable Spaces (SIGGRAPH Asia 2023 in Sydney).

- Check out our new Eyeful Tower dataset, the highest-resolution, highest-quality, high-dynamic-range, multi-view indoor scene dataset powering VR-NeRF.

- October 2023

- We presented one paper on Neural Fields for Structured Lighting at ICCV 2023 in Paris.

- June 2023

- We held DynaVis, the Fourth International Workshop on Dynamic Scene Reconstruction, at CVPR 2023 in Vancouver (afternoon of Monday 19 June).

- We presented two papers at CVPR 2023 in Vancouver: HyperReel and Neural Duplex Radiance Fields, for high-fidelity and real-time view synthesis, respectively.

- March 2023

- I am serving as an Area Chair for ICCV 2023.

- June 2022

- We presented our work on high-resolution 360° monocular depth estimation (360MonoDepth) at CVPR 2022 in New Orleans.

- April 2022

- I’m excited to join Reality Labs Research in Pittsburgh as a Research Scientist Lead.

Selected publications

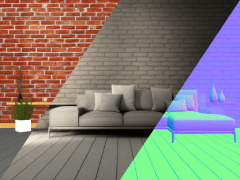

Ruofan Liang, Norman Müller, Ethan Weber, Duncan Zauss, Nandita Vijaykumar, Peter Kontschieder and Christian Richardt

Conference on Computer Vision and Pattern Recognition (CVPR) 2026

Jundan Luo, Xiaolong Wu, Nanxuan Zhao, Lu Wang, Wenbin Li and Christian Richardt

Computer Graphics Forum (Proceedings of Eurographics 2026)

Nikhil Keetha, Norman Müller, Johannes Schönberger, Lorenzo Porzi, Yuchen Zhang, Tobias Fischer, Arno Knapitsch, Duncan Zauss, Ethan Weber, Nelson Antunes, Jonathon Luiten, Manuel Lopez-Antequera, Samuel Rota Bulò, Christian Richardt, Deva Ramanan, Sebastian Scherer and Peter Kontschieder

International Conference on 3D Vision 2026

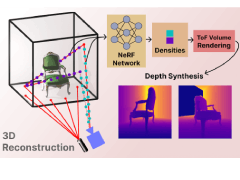

Runfeng Li, Mikhail Okunev, Zixuan Guo, Anh Ha Duong, Christian Richardt, Matthew O’Toole and James Tompkin

CVPR 2025 (oral, 0.7% acceptance rate)

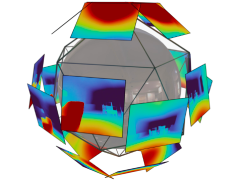

Manuel Rey-Area and Christian Richardt

IEEE Transactions on Visualization and Computer Graphics (IEEE VR 2025)

Jundan Luo, Duygu Ceylan, Jae Shin Yoon, Nanxuan Zhao, Julien Philip, Anna Frühstück, Wenbin Li, Christian Richardt and Tuanfeng Y. Wang

SIGGRAPH 2024

Tzofi Klinghoffer, Xiaoyu Xiang, Siddharth Somasundaram, Yuchen Fan, Christian Richardt, Ramesh Raskar and Rakesh Ranjan

CVPR 2024 (oral/award candidate, 0.8%/0.2% acceptance rate)

Haithem Turki, Vasu Agrawal, Samuel Rota Bulò, Lorenzo Porzi, Peter Kontschieder, Deva Ramanan, Michael Zollhöfer and Christian Richardt

CVPR 2024 (highlight, 2.8% acceptance rate)

Li Ma, Vasu Agrawal, Haithem Turki, Changil Kim, Chen Gao, Pedro V. Sander, Michael Zollhöfer and Christian Richardt

CVPR 2024 (highlight, 2.8% acceptance rate)

Linning Xu, Vasu Agrawal, William Laney, Tony Garcia, Aayush Bansal, Changil Kim, Samuel Rota Bulò, Lorenzo Porzi, Peter Kontschieder, Aljaž Božič, Dahua Lin, Michael Zollhöfer and Christian Richardt

SIGGRAPH Asia 2023

Benjamin Attal, Jia-Bin Huang, Christian Richardt, Michael Zollhöfer, Johannes Kopf, Matthew O’Toole and Changil Kim

CVPR 2023 (highlight, 2.6% acceptance rate)

Manuel Rey-Area*, Mingze Yuan* and Christian Richardt

Conference on Computer Vision and Pattern Recognition (CVPR) 2022

Benjamin Attal, Eliot Laidlaw, Aaron Gokaslan, Changil Kim, Christian Richardt, James Tompkin and Matthew O’Toole

Advances in Neural Information Processing Systems (NeurIPS) 2021

Tobias Bertel, Mingze Yuan, Reuben Lindroos and Christian Richardt

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2020)

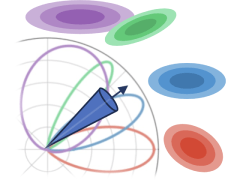

Thu Nguyen-Phuoc, Christian Richardt, Long Mai, Yong-Liang Yang and Niloy Mitra

Advances in Neural Information Processing Systems (NeurIPS) 2020

Christian Richardt, James Tompkin and Gordon Wetzstein

Book chapter in Real VR – Immersive Digital Reality, Springer 2020

Thu Nguyen-Phuoc, Chuan Li, Lucas Theis, Christian Richardt and Yong-Liang Yang

International Conference on Computer Vision (ICCV) 2019

George Koulieris, Kaan Akşit, Michael Stengel, Rafał K. Mantiuk, Katerina Mania and Christian Richardt

Computer Graphics Forum (Eurographics 2019 State-of-the-Art Report)

Christian Richardt, Yael Pritch, Henning Zimmer and Alexander Sorkine-Hornung

Proceedings of CVPR 2013 (oral presentation, 3.3% acceptance rate)